Incorporating comments from the community, we have produced a new performance evaluation report for HBase 0.20.0. Please refer to the following document.

We have been using HBase for around a year in our development and projects, from 0.17.x to 0.19.x. We, like the rest of the community, are well aware of the serious performance and throughput issues in these releases.

Now, the great news is that hbase-0.20.0 will be released soon. Jonathan Gray from Streamy, Ryan Rawson from StumbleUpon, and Jean-Daniel Cryans have done a great job rewriting much of the code to improve performance. The two presentations [1][2] provide more details on this release.

The following items are very important to us:

- Insert performance: our data is generated at a high rate.

- Scan performance: for data analysis with MapReduce.

- Random access performance.

- The HFile format (analogous to the SSTable).

- Lower memory and I/O overhead.

Below is our evaluation of hbase-0.20.0 RC1:

Cluster:

- 5 slaves + 1 master

- Slaves (1-4): 4 CPU cores (2.0 GHz), 800GB SATA disks, 8GB RAM. Slave (5): 8 CPU cores (2.0 GHz), 6 disks with RAID1, 4GB RAM

- 1Gbps network, all nodes under the same switch.

- Hadoop-0.20.0, HBase-0.20.0, Zookeeper-3.2.0

We modified org.apache.hadoop.hbase.PerformanceEvaluation because the code has the following problems:

- It is not compatible with hadoop-0.20.0.

- Its approach to splitting the work across map tasks is not strict. It needs to provide correct InputSplit and InputFormat classes.

The evaluation programs use MapReduce to run parallel operations against an HBase table.

- Total rows: 5,242,850.

- Row size: 1000 bytes for value, and 10 bytes for rowkey.

- Sequential ranges: 50 (also the total number of map tasks in each evaluation).

- Rows per sequential range: 104,857
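As a quick sanity check on the figures above, here is a minimal Python sketch that splits the row space into equal sequential ranges and estimates the raw payload size. The function name and the half-open range layout are illustrative assumptions, not taken from our PerformanceEvaluation code, and the size estimate ignores HBase per-cell overhead and HDFS replication.

```python
# Workload figures quoted in the list above.
TOTAL_ROWS = 5_242_850
VALUE_BYTES = 1000
ROWKEY_BYTES = 10
NUM_RANGES = 50  # also the number of map tasks per evaluation


def sequential_ranges(total_rows, num_ranges):
    """Split [0, total_rows) into equal, contiguous (start, end) row ranges.

    Hypothetical helper for illustration; ranges are half-open.
    """
    rows_per_range = total_rows // num_ranges
    return [(i * rows_per_range, (i + 1) * rows_per_range)
            for i in range(num_ranges)]


ranges = sequential_ranges(TOTAL_ROWS, NUM_RANGES)
rows_per_range = ranges[0][1] - ranges[0][0]

# Raw payload only: value plus rowkey, no per-cell metadata, no replication.
payload_bytes = TOTAL_ROWS * (VALUE_BYTES + ROWKEY_BYTES)

print(rows_per_range)                 # 104857
print(ranges[-1])                     # (5137993, 5242850)
print(round(payload_bytes / 1e9, 2))  # 5.3 (GB)
```

So each map task handles 104,857 rows, and one full pass moves roughly 5.3 GB of raw key and value data.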

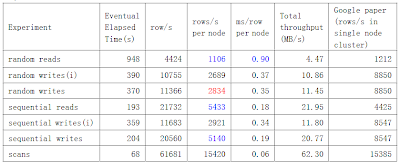

The principle is the same as that of the evaluation programs described in Section 7, Performance Evaluation, of the Google Bigtable paper [3], pages 8-10. Since we have only 5 nodes to act as clients, we set mapred.tasktracker.map.tasks.maximum=3 to avoid a client-side bottleneck.
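The effect of this setting on client concurrency can be worked out with a few lines of Python. This is a rough sketch that assumes all 5 slave nodes run map tasks and that every slot is kept busy; the variable names are only for illustration.

```python
import math

CLIENT_NODES = 5        # slave nodes acting as clients
MAP_SLOTS_PER_NODE = 3  # mapred.tasktracker.map.tasks.maximum
MAP_TASKS = 50          # one map task per sequential range

# Upper bound on map tasks (clients) running at the same time.
concurrent_clients = CLIENT_NODES * MAP_SLOTS_PER_NODE

# Number of scheduling "waves" needed to run all map tasks.
waves = math.ceil(MAP_TASKS / concurrent_clients)

# Tasks left for the final, partially filled wave.
last_wave_tasks = MAP_TASKS - (waves - 1) * concurrent_clients

print(concurrent_clients)  # 15
print(waves)               # 4
print(last_wave_tasks)     # 5
```

Under these assumptions at most 15 clients hit HBase concurrently, and the 50 map tasks run in about four waves, the last of which is only partly filled.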

randomWrite (init) and sequentialWrite (init) are evaluations against a new table. Since only one RegionServer is accessed at the beginning, the performance is not so good. randomWrite and sequentialWrite are evaluations against an existing table that is already distributed across all 5 nodes.

Compared to the metrics in the Google paper (Figure 6): write and randomRead performance is still not so good, but this result is much better than any previous HBase release, especially for randomRead. We even got better results than the paper on the sequentialRead and scan evaluations (though we should be aware that the paper was published in 2006). This result gives us confidence.

- The new HFile should be the major success.

- The BlockCache improves sequentialRead and scan performance.

- The client-side write buffer accelerates sequentialWrite, but not dramatically, since each write operation is still written to the commit log file and the memstore.

- randomRead performance is not good enough; perhaps Bloom filters will improve it in the future.

- scan is so fast that MapReduce analysis on HBase tables will be efficient.

We are looking forward to, and researching, the following features:

- Bloom Filter to accelerate randomRead.

- Bulk-load.

We need to do more analysis of this evaluation and read the code in detail. Here is our PerformanceEvaluation code: http://dl.getdropbox.com/u/24074/code/PerformanceEvaluation.java

References:

[1] Ryan Rawson's presentation at NOSQL, http://blog.oskarsson.nu/2009/06/nosql-debrief.html

[2] HBase Goes Realtime, http://wiki.apache.org/hadoop-data/attachments/HBase(2f)HBasePresentations/attachments/HBase_Goes_Realtime.pdf

[3] Google, Bigtable: A Distributed Storage System for Structured Data, http://labs.google.com/papers/bigtable.html

Anty Rao and Schubert Zhang

Thanks for posting, Anty and Schubert. How many CPUs are in your nodes? It looks like HBase is faster than the BigTable paper at scanning and doing sequential reads (am I reading that right?). Something's up with our writes at the moment. Need to look into it. We seem to have lost speed here since 0.19. Good work!

You are showing ~2ms for random reads per node? Is that right?

That's about as good as we can get on your hardware. Bloom filters will only help in the case of a miss, not a hit. Besides that, you're already showing 2-4X better performance than a disk seek. Any other improvement will have to come from HDFS optimizations, RPC optimizations, and of course you can always get better performance by loading up with more RAM for the filesystem cache. Try 8GB or 16GB in your nodes and you might get sub-ms on average per node, but remember, you're serving out of memory then and not seeking. Adding more memory (and regionserver heap) should help the numbers across the board.

The BigTable paper shows 1212 random reads per second on a single node... That's sub-ms for random access, clearly not actually doing disk seeks for most gets.
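The conversion between the two ways of quoting these numbers can be sketched in Python. This is a back-of-the-envelope calculation that treats per-node throughput as the reciprocal of the average time per read, which ignores any concurrency on the node; the helper names are made up for illustration.

```python
def ms_per_read(reads_per_second):
    """Average time per read in milliseconds for a given per-node rate."""
    return 1000.0 / reads_per_second


def reads_per_second(ms_per_read_value):
    """Per-node read rate implied by an average time per read."""
    return 1000.0 / ms_per_read_value


# BigTable paper figure quoted above: 1212 random reads/s on a single node.
print(round(ms_per_read(1212), 3))  # 0.825

# The ~2 ms per random read discussed in this thread.
print(reads_per_second(2.0))        # 500.0
```

By this estimate, 1212 reads per second corresponds to about 0.8 ms per read, while ~2 ms per read corresponds to roughly 500 reads per second per node.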

See HBASE-1771 Anty and Schubert. Sequential/Random writes should go up by factors of 2-4.

@stack:

Slaves (1-4): 4 CPU cores (2.0 GHz); Slave (5): 8 CPU cores (2.0 GHz). I have just updated the post to add the CPU info.

We will modify the configuration according to HBASE-1771 and post the new results. It looks great. Thanks, stack.

@J-G:

Yes, ~2ms per row and per node for random reads; it is the overall average. It is less than the ~10ms of a disk seek, which is probably thanks to the cache and the HFile implementation. For now, I assign only 2GB of heap to each region server. Maybe RAID0 across multiple disks can help.

And I have thought more about Bloom filters: you are right, they would not help in these evaluations.

After our retest, we found that HBASE-1771 does not improve the performance. So it seems that's about as good as we can get on our hardware.

Another issue is about random reads.

I used sequential writes to insert 5GB of data into an empty HBase table, which generated ~30 regions. The random-read test then took about 30 minutes to complete. I then ran the sequential writes again, so another version of each cell was inserted and ~60 regions were generated. But when I ran the random reads against this table again, it always took a long time (more than 2 hours). When the data in the table is inserted by random writes, the random reads are also slow.

@Schubert So, your machines resemble those described in the BigTable paper? On the random-read test, now it's 4X longer, so it's an average of 8ms a read? My guess is that you are missing the cache more often now. What if you ran a major compaction on the table (check the logs to see that it completes)? Does the time change much?

@stack, yes. We have fixed this random-read issue; it was caused by one ineffective node.

Anyone can get the test code from https://issues.apache.org/jira/browse/HBASE-1778

Bradford Stephens to hbase-user Sep 23 (5 days ago)

A quick performance snapshot: I believe with our cluster of 18 nodes (8 cores, 8 GB RAM, 2 x 500 GB drives per node), we were inserting rows of about 5-10kb at the rate of 180,000 /second. That's on a completely untuned cluster. You could see much better performance with proper tweaking and LZO compression.

Can't print this presentation from SlideShare, will it be publicly available in any other form?

@Igor Katkov,

Now you can download the document from SlideShare.

Hbase provides Bigtable-like capabilities on top of Hadoop and HDFS.
